Walk into any customer support center in Mumbai, Bengaluru, or Delhi, and you will hear something that no textbook ever taught: a seamless, unconscious blend of Hindi and English flowing in a single breath. A caller says, "Mera order abhi tak deliver nahi hua, can you please check the status?" The sentence starts in Hindi, pivots to English, and lands back in a perfectly natural conversational rhythm. For a human agent, this is effortless. For most AI voice systems, it is still a serious technical challenge.

This is the code-switching reality of 600 million Hindi-speaking Indians who also operate in English professionally and socially. For businesses deploying AI voice agents across India, cracking this communication pattern is not a nice-to-have feature. It is the single biggest factor determining whether a customer feels heard or frustrated, whether a call resolves in 90 seconds or escalates to a human, and whether an AI calling investment delivers genuine ROI or disappoints at scale.

This guide breaks down exactly how advanced AI voice agents are engineered to handle Hindi-English code-switching, why older-generation bots fail at it, and what enterprises must look for when choosing a multilingual AI call platform for the Indian market.

What Is Hindi-English Code-Switching and Why Does It Matter for Business

Code-switching is the linguistic practice of alternating between two or more languages within a single conversation, a sentence, or even a single phrase. In India, Hindi-English code-switching, commonly called Hinglish, is not slang or a sign of incomplete fluency. It is the natural, dominant communication mode for hundreds of millions of urban and semi-urban Indians across every socioeconomic segment.

For businesses, this matters enormously because customer calls are not sanitized scripts. They are real, messy, emotionally loaded conversations. When an AI voice agent fails to understand a mid-sentence language switch, it either repeats a generic clarification prompt ("Sorry, I did not understand, could you please repeat?") or worse, responds in only one language, which immediately signals to the caller that the system is inadequate. Research consistently shows that customers who feel misunderstood by an automated system are far more likely to demand human transfer, abandon the call, or lose trust in the brand.

An AI calling platform that genuinely handles code-switching does not just improve call resolution rates. It transforms customer perception of the brand by making automation feel personal, local, and genuinely intelligent.

Why Standard AI Voice Agents Fail with Indian Conversations

Most AI voice platforms were built on English-first architectures, then adapted for other languages as secondary overlays. This approach creates structural weaknesses that become catastrophic in a Hinglish environment.

The first failure point is monolingual ASR (Automatic Speech Recognition). A conventional voice bot trained primarily on English or standard Hindi cannot reliably transcribe a sentence that switches mid-phrase. When it encounters "Bhai, my account ka password reset nahi ho raha," it either misinterprets "account ka" as noise or loses the thread entirely, producing a fragmented or incorrect transcript that breaks every downstream NLP process.

The second failure point is intent recognition. Even if a system partially transcribes a mixed-language sentence, its natural language understanding model must correctly identify the caller's intent. Models trained on single-language datasets struggle with hybrid constructions. "Yeh wala plan upgrade karna hai, what's the best option?" requires the system to understand a desire for a plan upgrade (stated in Hindi grammar with English words) and a request for a recommendation (stated in English). If the intent model is not trained on Hinglish patterns, it will miss the full request.

The third failure point is response generation. A system that detects Hindi input responds in Hindi. A system that detects English input responds in English. But when the caller is actively mixing both, a response in only one language feels jarring and unnatural. The caller loses confidence in the system immediately.

The fourth failure point is accent variability. Hindi speakers from Uttar Pradesh, Rajasthan, Gujarat, and Maharashtra each bring distinct phonetic patterns to their English words. The word "problem" might be pronounced with five different stress and vowel patterns depending on the speaker's regional background. An ASR system not trained across these regional accent variations will generate high word error rates, which cascades into complete conversation failure.

These failures are not edge cases. They represent the majority of calls in any Indian business context, which is why purpose-built multilingual AI voice agents for the Indian market represent such a significant technological and commercial opportunity.

How Modern AI Voice Agents Decode Code-Switching in Real Time

The most capable AI voice agents for Hindi-English conversations are not dual-language systems running two separate models in parallel. They are unified multilingual architectures that treat Hinglish as its own coherent linguistic domain.

Multilingual Speech Recognition Architecture

Modern Hinglish-capable AI voice agents use a single acoustic model trained on massive corpora of natural Indian speech. These corpora include not just formal Hindi and formal English, but also natural conversations recorded in customer service interactions, social media audio, call center recordings, and regional speech samples collected across multiple Indian states.

This unified acoustic model learns that the phoneme sequence in "delivery" as spoken by a Hindi speaker follows a predictable pattern that differs from the phoneme sequence as spoken by a native English speaker, but both map to the same word. Instead of forcing the incoming audio into a predetermined language bucket, the model scores each stretch of audio against candidate words from the Hindi and English vocabularies simultaneously. This dramatically reduces word error rates on mixed-language input compared to sequential language detection approaches.

The best platforms in this space achieve ASR word error rates below 12 percent on natural Hinglish speech, a threshold that makes downstream intent parsing reliable enough for production deployment. Older multilingual approaches that run separate Hindi and English ASR models in sequence can produce error rates exceeding 30 percent on the same input.
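Word error rate, the metric behind these thresholds, is the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. The following is a minimal sketch, assuming romanized transcripts and plain whitespace tokenization (both simplifications); the function name and the sample hypothesis transcript are illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] is the edit distance between the reference words processed
    # so far (before this iteration) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# Illustrative pair: the ASR output drops "ka" and adds a trailing "hai".
reference = "bhai my account ka password reset nahi ho raha"
hypothesis = "bhai my account password reset nahi ho raha hai"
wer = word_error_rate(reference, hypothesis)  # 2 edits over 9 reference words
```

Measuring this on a held-out set of genuinely mixed-language calls, rather than on clean monolingual audio, is what makes the figure comparable to the thresholds quoted here.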

Contextual Language Identification

Beyond phoneme-level recognition, advanced AI voice agents perform sentence-level and phrase-level language identification as part of the NLP pipeline. The system continuously updates its language probability distribution as the conversation progresses. If a caller has been speaking primarily in Hindi for the first 40 seconds of a call, the model assigns higher prior probability to Hindi interpretations for ambiguous phoneme sequences, but immediately recalibrates if an English phrase enters the stream.

This contextual language identification also serves a second function: it allows the AI agent to mirror the caller's language preferences in its responses. If roughly 70 percent of a caller's speech is in Hindi and 30 percent in English, a sophisticated AI voice agent will generate responses that reflect a similar ratio, making the interaction feel natural rather than algorithmically rigid.
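One simple way to picture this running estimate is exponential smoothing over per-utterance language shares. The sketch below is an illustration, not a description of any particular platform: it assumes an upstream tagger has already labeled each recognized word as "hi" or "en", and the class name, decay value, and register thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LanguageMixTracker:
    """Running estimate of the caller's Hindi share, updated per utterance."""
    hindi_share: float = 0.5   # neutral prior before any speech is heard
    decay: float = 0.6         # weight kept by the previous estimate (assumed)

    def update(self, word_tags: list[str]) -> float:
        """word_tags holds one 'hi' or 'en' tag per recognized word."""
        if not word_tags:
            return self.hindi_share
        utterance_share = word_tags.count("hi") / len(word_tags)
        # Exponential smoothing: the newest utterance gets weight (1 - decay),
        # so an English phrase shifts the estimate without erasing history.
        self.hindi_share = (
            self.decay * self.hindi_share + (1 - self.decay) * utterance_share
        )
        return self.hindi_share

    def response_register(self) -> str:
        """Pick the register for the agent's next reply (thresholds assumed)."""
        if self.hindi_share >= 0.8:
            return "hindi"
        if self.hindi_share <= 0.2:
            return "english"
        return "hinglish"


tracker = LanguageMixTracker()
# One utterance with 7 Hindi-tagged and 3 English-tagged words.
tracker.update(["hi", "hi", "hi", "en", "hi", "hi", "hi", "en", "en", "hi"])
register = tracker.response_register()
```

A lower decay makes the agent follow the caller's switches faster, at the cost of a less stable estimate over a long call.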

Intent Mapping Across Language Switches

The most advanced layer in a code-switching AI voice agent is the cross-lingual intent mapping module. This component is responsible for understanding that "mera refund kab aayega, it's been 10 days" and "when will my refund come, 10 din ho gaye hain" express identical intents despite inverting the language of each clause.

Cross-lingual intent mapping uses multilingual transformer models, most commonly variants of mBERT (multilingual BERT) or XLM-RoBERTa, fine-tuned on domain-specific Hinglish conversational datasets. These models generate language-agnostic semantic embeddings, meaning they represent the meaning of a phrase in a shared vector space regardless of whether the phrase was spoken in Hindi, English, or a blend of both. Downstream intent classifiers then operate on these embeddings, making them inherently language-agnostic and dramatically more robust to code-switching.
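To make the shared-vector-space idea concrete, here is a deliberately tiny stand-in for a multilingual encoder. It does not use mBERT or XLM-RoBERTa; instead, a hypothetical hand-written lexicon maps Hindi and English tokens with the same meaning to one concept ID, and intents are matched by cosine similarity. The lexicon, intent names, and prototypes are all assumptions made for illustration.

```python
import math
from collections import Counter

# Toy stand-in for a multilingual encoder: tokens that share a meaning map
# to the same concept ID regardless of language. Hypothetical lexicon.
CONCEPTS = {
    "refund": "REFUND",
    "kab": "WHEN", "when": "WHEN",
    "aayega": "ARRIVE", "come": "ARRIVE",
    "days": "DAYS", "din": "DAYS",
    "upgrade": "UPGRADE", "plan": "PLAN",
}

# One prototype vector per intent, expressed in concept space.
INTENT_PROTOTYPES = {
    "refund_status": Counter({"REFUND": 1, "WHEN": 1, "ARRIVE": 1}),
    "plan_upgrade": Counter({"PLAN": 1, "UPGRADE": 1}),
}


def embed(utterance: str) -> Counter:
    """Map an utterance to a bag of language-independent concepts."""
    tokens = utterance.lower().replace(",", " ").split()
    return Counter(CONCEPTS[t] for t in tokens if t in CONCEPTS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse concept vectors."""
    dot = sum(a[k] * b[k] for k in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def classify(utterance: str) -> str:
    """Return the intent whose prototype is closest to the utterance."""
    vector = embed(utterance)
    return max(
        INTENT_PROTOTYPES,
        key=lambda name: cosine(vector, INTENT_PROTOTYPES[name]),
    )


first = classify("mera refund kab aayega, it's been 10 days")
second = classify("when will my refund come, 10 din ho gaye hain")
```

Both utterances land on the same vector and therefore the same intent, even though the language of each clause is inverted. In a production system the lexicon lookup would be replaced by the learned embeddings of a fine-tuned multilingual transformer, but the downstream classifier works on the same principle.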

The Hinglish Reality: What Indian Callers Actually Sound Like

Understanding the theory of code-switching is one thing. Understanding what it sounds like in practice is essential for anyone making decisions about AI voice agent deployment.

Here is a representative set of real conversational patterns from Indian customer service environments:

A banking caller says: "Mera account freeze ho gaya hai, I need to unblock it urgently, can you help?" The switch happens at the transition from problem statement to action request.

A telecom subscriber says: "Yeh data pack wala offer, what is the validity, aur kya international roaming included hai?" The inquiry starts with a reference in Hindi, asks a question in English, then adds a follow-up in Hindi.

An e-commerce customer says: "Order cancel karna tha, but ab cancel nahi ho raha, site pe error aa raha hai." Here the entire sentence stays structurally Hindi but borrows "order," "cancel," "but," "site," and "error" from English.

A healthcare caller says: "Doctor sahab ke saath appointment book karni hai, tomorrow morning preferred hai." The sentence is grammatically Hindi with English words and phrases inserted naturally.

None of these patterns are unusual or exceptional. They are the norm. Any AI voice agent deployed for Indian customer service that cannot handle all four of these patterns fluently will fail on the majority of its calls. This is not a theoretical concern. It is the operational reality that businesses learn quickly and painfully when they deploy English-only or Hindi-only bots in Indian contact center environments.

Technical Infrastructure Behind Hinglish AI Voice Agents

Building an AI voice agent that genuinely handles code-switching requires purpose-built infrastructure across three core layers.

Dual Language Model Fusion

The most effective architectures use what AI researchers call dual language model fusion. Rather than running two separate language models and routing between them, fusion systems train a single model on interleaved Hindi-English data from the ground up. The resulting model does not think in Hindi or English. It thinks in meaning, drawing from both linguistic systems simultaneously.

This approach is significantly more computationally expensive to train but dramatically more accurate in production. It eliminates the latency and error introduced by language detection as a pre-processing step, which is particularly important for voice interactions where every 200 milliseconds of additional processing delay noticeably degrades the caller experience.

Phoneme-Level Acoustic Modeling

The acoustic modeling layer in a Hinglish AI voice agent must handle phoneme inventories from both Hindi and English, as well as the hybrid phoneme patterns that emerge when Hindi speakers pronounce English words. For example, the English word "problem" is often pronounced as "problam" or "prablem" by Hindi speakers from northern India, while speakers from Maharashtra often say "problem" with a distinctive vowel pattern. A monolingual English acoustic model will fail to map these to the correct word, while a purpose-built multilingual model with regional accent training will succeed.

Leading AI voice platforms achieve this through massive data collection programs that record and label thousands of hours of Indian English and Hindi speech across regional accent groups, age groups, and socioeconomic contexts. These recordings form the training foundation for acoustic models that generalize reliably across the real Indian caller population.

Conversational Memory and Context Retention

Code-switching does not just happen at the word level. It shapes the conversational flow over entire calls. An AI voice agent handling a complex support query must maintain context across multiple language switches within the same conversation thread. If a caller establishes that they are asking about "order number 4782" in English and then three turns later refers to it as "woh wala order" in Hindi, the system must recognize that "woh wala order" refers back to order 4782 without requiring the caller to repeat the reference number.

This cross-lingual coreference resolution, the ability to track entities and references across language switches, is one of the most technically demanding features of a mature Hinglish AI voice agent. Systems that lack this capability force callers to repeatedly restate context, which is one of the primary drivers of caller frustration and negative CSAT scores.
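The order-number scenario can be sketched with a small entity memory. This is a rule-based illustration only: real systems resolve references with learned models, and the class name, regular expression, and list of back-reference phrases here are hypothetical and far from exhaustive.

```python
import re
from typing import Optional


class EntityMemory:
    """Remembers the most recent order number mentioned in the call."""

    ORDER_NUMBER = re.compile(r"order (?:number )?(\d+)", re.IGNORECASE)
    # Hypothetical, non-exhaustive back-references in either language.
    BACK_REFERENCES = ("woh wala order", "wahi order", "that order", "the same order")

    def __init__(self) -> None:
        self.last_order: Optional[str] = None

    def resolve(self, utterance: str) -> Optional[str]:
        """Return the order the utterance refers to, or None if there is none."""
        explicit = self.ORDER_NUMBER.search(utterance)
        if explicit:
            # An explicit number always overwrites the remembered order.
            self.last_order = explicit.group(1)
            return self.last_order
        lowered = utterance.lower()
        if any(phrase in lowered for phrase in self.BACK_REFERENCES):
            # A back-reference in Hindi or English points at the stored order.
            return self.last_order
        return None


memory = EntityMemory()
memory.resolve("I am calling about order number 4782")
memory.resolve("Delivery address bhi change karna hai")
resolved = memory.resolve("Woh wala order kab tak aayega?")
```

Here `resolved` carries the order number established three turns earlier in English, so the caller never has to repeat it after switching to Hindi.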

Business Use Cases Where Hinglish AI Voice Agents Deliver Measurable ROI

The commercial case for Hinglish-capable AI voice agents is compelling across multiple Indian industry verticals. The following examples reflect the operational patterns where the technology delivers its strongest business impact.

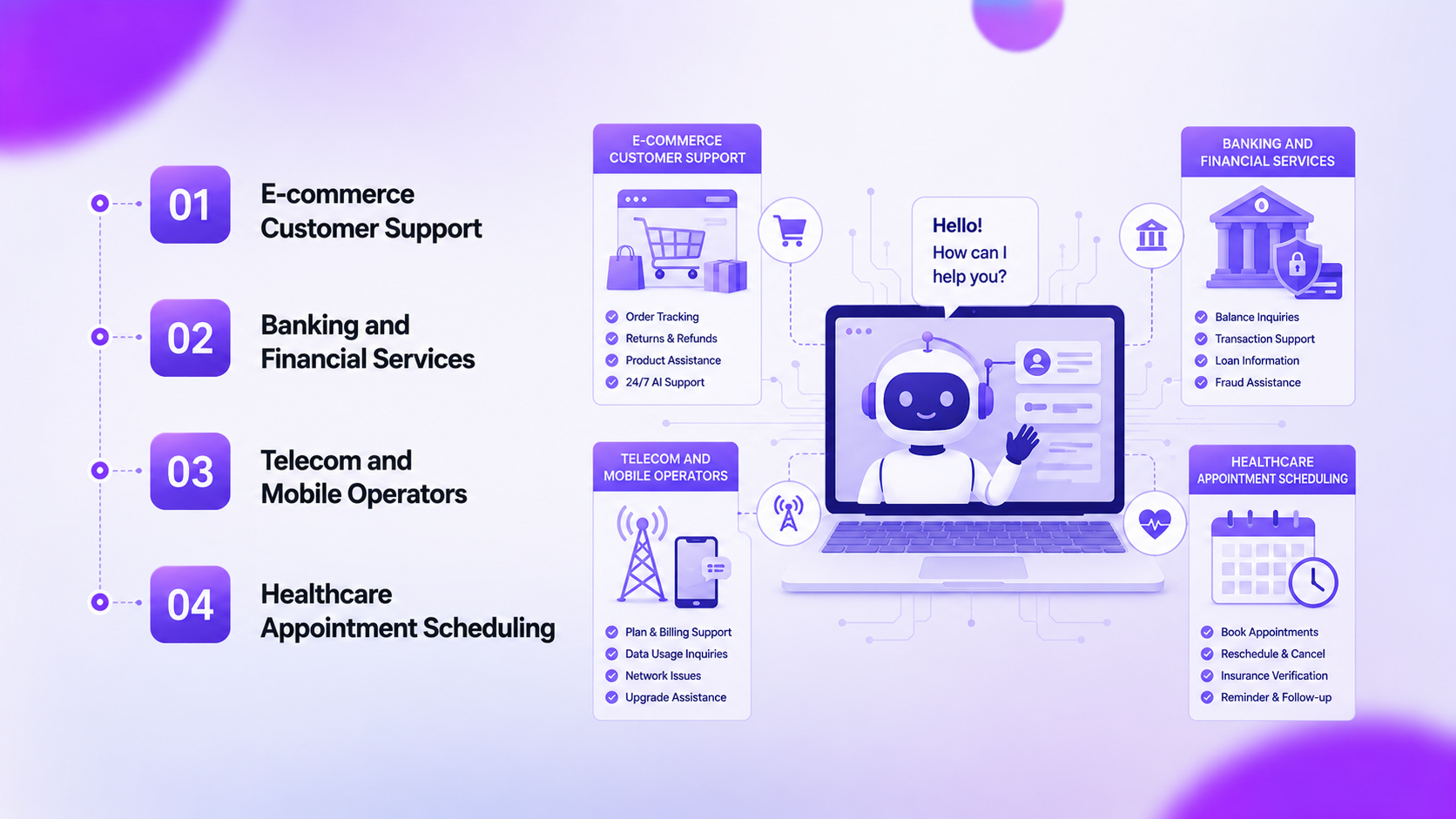

E-commerce Customer Support

Indian e-commerce generates over 400 million customer support interactions annually across platforms like Flipkart, Meesho, and thousands of D2C brands. The overwhelming majority of these interactions involve order tracking, return requests, refund follow-ups, and delivery escalations, all of which are information-retrieval tasks that AI voice agents handle with high accuracy when they can understand the caller.

A Hinglish-capable AI voice agent deployed in an e-commerce support context can resolve order status queries, process return requests, and escalate complex issues to human agents with a handoff summary, all within a 60 to 90 second call. When deployed at scale, enterprises report first-call resolution rates of 75 to 85 percent for these query categories, compared to 45 to 60 percent for English-only voice bots that struggle with mixed-language callers.

The cost per resolution for AI-handled calls runs 60 to 80 percent lower than human-handled calls in the Indian contact center market. For businesses handling 100,000 calls per month, this translates to annual savings in the range of 2 to 4 crore rupees depending on staffing costs and average handling time.
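The arithmetic behind a savings estimate of this kind can be laid out explicitly. In the sketch below, only the call volume and the 60 to 80 percent cost range come from the text; the human cost per call and the share of calls the AI resolves are hypothetical inputs that should be replaced with your own figures.

```python
calls_per_month = 100_000      # call volume from the example in the text
human_cost_per_call = 35.0     # rupees; hypothetical, varies by contact center
ai_cost_reduction = 0.70       # midpoint of the 60 to 80 percent range
ai_handled_share = 0.75        # hypothetical share of calls the AI resolves

annual_calls = calls_per_month * 12
# Rupees saved on each call that the AI resolves instead of a human agent.
saving_per_ai_call = human_cost_per_call * ai_cost_reduction
annual_saving_rupees = annual_calls * ai_handled_share * saving_per_ai_call
annual_saving_crore = annual_saving_rupees / 1e7   # 1 crore = 10 million

print(f"Estimated annual saving: {annual_saving_crore:.2f} crore rupees")
```

With these particular assumptions the estimate lands near the lower end of the 2 to 4 crore range; a higher human cost per call or a higher AI-handled share moves it upward.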

Banking and Financial Services

The BFSI sector in India manages a customer base of over 900 million account holders, many of whom prefer to interact in their regional language or in Hinglish. Common AI voice agent use cases in banking include account balance inquiries, transaction dispute initiation, fixed deposit queries, loan status updates, and credit card payment reminders.

The regulatory environment adds a layer of complexity. Banking AI voice agents must be extremely precise in understanding financial terms, account numbers, and transaction references regardless of the language in which they are communicated. A system that misunderstands "mera FD ka interest rate kya hai" as a current account balance query can create serious customer experience failures and even compliance exposure.

Banks that have deployed purpose-built Hinglish AI voice agents report containment rates of 70 percent or higher for routine tier-one queries, meaning that at least 70 percent of these calls are fully resolved without human agent involvement. This drives significant operational savings while also reducing call wait times for the minority of callers who do require human assistance.

Telecom and Mobile Operators

India's telecom sector handles the highest volume of customer service interactions of any industry in the country, driven by a subscriber base exceeding 1.1 billion connections. Plan upgrade inquiries, data pack activations, bill payment issues, SIM-related requests, and network complaint escalations collectively represent tens of millions of calls monthly.

Telecom customers in India are particularly diverse in language preference and mix. A prepaid subscriber in rural Rajasthan might speak almost entirely in Hindi with a handful of English technical terms. An urban postpaid subscriber in Hyderabad might speak primarily in English with Telugu and Hindi phrases mixed in. A Hinglish AI voice agent that handles Hindi-English switching well but misses regional language overlaps will still serve the majority of calls effectively, since Hindi-English is the dominant code-switching pattern across most Indian states.

Telecom operators using advanced AI calling platforms have achieved average handling time reductions of 40 to 50 percent for automated calls compared to human-handled equivalents, with customer satisfaction scores that meet or exceed the satisfaction scores for human-handled routine queries.

Healthcare Appointment Scheduling

The Indian healthcare sector is in the middle of a digital transformation that is being driven partly by AI-powered patient interaction tools. Appointment scheduling, prescription refill reminders, diagnostic report notifications, and doctor availability queries are all routine tasks that AI voice agents can handle effectively.

Hospitals and clinic chains that have deployed AI voice agents for appointment management report that proactive reminder calls cut missed appointments by 30 to 40 percent, and that front desk staffing requirements fall by 50 percent.

Implementation Framework: Deploying a Code-Switching AI Voice Agent

Deploying a Hinglish AI voice agent is not simply a matter of switching on a vendor's standard multilingual module. A structured implementation framework is essential for achieving production-quality results.

Audit Your Actual Call Data

Before selecting a platform or building conversation flows, analyze a representative sample of your existing customer calls. Identify the actual language patterns your callers use, the specific vocabulary domains they draw from, and the intent categories that represent the highest call volume. This audit will reveal exactly which Hinglish patterns your AI voice agent must handle and will serve as your ground truth for training and testing.

Define Intent Architecture for Both Languages

Build your intent taxonomy in a language-agnostic manner from the start. For each intent your AI voice agent must handle, document representative phrases in pure Hindi, pure English, and Hinglish mixing patterns. This multilingual intent library becomes the foundation for training your NLP model.
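A multilingual intent library of this kind can be kept as plain structured data, with a small check that flags intents still missing examples in one of the three registers. The sketch below is one possible layout; the class, field names, and sample phrases are illustrative, not a format required by any platform.

```python
from dataclasses import dataclass, field

REGISTERS = ("hindi", "english", "hinglish")


@dataclass
class Intent:
    """One language-agnostic intent with example phrases per register."""
    name: str
    examples: dict[str, list[str]] = field(default_factory=dict)

    def missing_registers(self) -> list[str]:
        """Registers that still have no example phrase for this intent."""
        return [r for r in REGISTERS if not self.examples.get(r)]


refund_status = Intent(
    name="refund_status",
    examples={
        "hindi": ["mera paisa kab wapas aayega"],
        "english": ["when will my refund come"],
        "hinglish": ["mera refund kab aayega, it's been 10 days"],
    },
)
plan_upgrade = Intent(
    name="plan_upgrade",
    examples={"hinglish": ["yeh wala plan upgrade karna hai"]},
)

# Coverage report: which intents still need phrases, and in which register.
gaps = {i.name: i.missing_registers() for i in (refund_status, plan_upgrade)}
```

Running the coverage check before every training cycle keeps the library from drifting toward whichever register is easiest to write examples for.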

Select a Platform with Native Hinglish Support

Evaluate AI voice agent platforms on their specific performance with Hinglish input. Request test calls using your actual call audit samples. Measure word error rates on mixed-language input, not just on clean monolingual benchmarks. Many vendors claim multilingual capability but deliver it through bolt-on language detection that performs poorly on natural code-switching.

Train on Domain-Specific Data

Generic multilingual training is insufficient for most business contexts. Fine-tune your chosen model on domain-specific data, meaning real or realistic transcripts from your industry. A banking AI voice agent trained on general Hinglish speech will perform significantly worse than one additionally fine-tuned on financial service call transcripts in Hinglish.

Run Layered Testing Before Launch

Test your AI voice agent across multiple dimensions: regional accents within Hindi, different code-switching ratios (high Hindi, balanced, high English), different caller demographics, and different emotional states including frustration and urgency. These test conditions should reflect your real caller population as closely as possible.
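Crossing the test dimensions gives a concrete matrix of conditions to cover. The sketch below enumerates one with the standard library; the category values are hypothetical placeholders, and caller demographics are left out only to keep the example short.

```python
from itertools import product

# Hypothetical test dimensions; replace with categories from your call audit.
ACCENTS = ["uttar_pradesh", "rajasthan", "gujarat", "maharashtra"]
SWITCHING_RATIOS = ["high_hindi", "balanced", "high_english"]
EMOTIONAL_STATES = ["neutral", "frustrated", "urgent"]

# Every combination of accent, switching ratio, and emotional state.
test_matrix = [
    {"accent": accent, "ratio": ratio, "emotion": emotion}
    for accent, ratio, emotion in product(
        ACCENTS, SWITCHING_RATIOS, EMOTIONAL_STATES
    )
]

print(f"{len(test_matrix)} test conditions to cover")
```

Each cell can then be filled with recorded or scripted calls, and any cell with no test audio is a known blind spot at launch.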

Monitor, Retrain, and Optimize Continuously

Deploy with a monitoring framework that tracks intent recognition accuracy, escalation rates, and caller satisfaction by language pattern category. Use this data to identify specific code-switching patterns where your system underperforms and drive continuous retraining cycles.

Common Mistakes Businesses Make When Deploying Multilingual AI Voice Agents

Understanding what goes wrong in multilingual AI voice deployments is as important as understanding what goes right. These are the most common and costly mistakes Indian businesses make when deploying Hinglish-capable AI voice agents.

Assuming Standard Multilingual Equals Hinglish-Ready

Most leading cloud-based AI platforms support Hindi and English as separate languages. Businesses assume this means they support Hinglish. They do not, not in any reliable production sense. Supporting two languages independently and handling mid-sentence switching between them are entirely different technical problems. Businesses discover this gap in the first week of live deployment when escalation rates spike and CSAT scores drop.

Skipping Regional Accent Training

India is not linguistically uniform, even within Hindi. A caller from Lucknow and a caller from Jaipur will stress English words very differently. Businesses that deploy AI voice agents trained only on standard Hindi and standard English accent profiles create systems that systematically fail callers with regional accents, who make up a significant portion of the Indian population.

Building Response Logic in One Language Only

Many businesses build their AI voice agent's response templates entirely in English for simplicity, then add a translation layer for Hindi responses. This creates stilted, unnatural responses that immediately signal to Hinglish callers that the system does not truly understand their communication style. Naturalness in response generation requires building templates natively in the expected output register, which for most Indian business contexts means Hinglish.

Neglecting Emotion Detection Across Languages

Code-switching is often emotionally driven. When a caller switches from English to Hindi mid-sentence, it frequently signals rising emotional intensity or urgency. AI voice agents that do not detect and appropriately respond to emotional signals in Hinglish will regularly mishandle escalating caller situations, failing to de-escalate frustration before it reaches the point of call abandonment or demand for human transfer.

Over-Automating Without Clear Escalation Design

Businesses that are excited about cost savings from AI automation sometimes build overly restrictive escalation paths, making it difficult for callers to reach human agents when the AI cannot resolve their query. In a Hinglish environment where the AI may still struggle with edge-case linguistic patterns, a generous and clearly signaled escalation path is essential for preserving caller trust and CSAT scores during the optimization phase.
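A generous escalation path can be as simple as two rules: hand off immediately when the caller asks for a person, and hand off after a small number of consecutive low-confidence turns. The sketch below assumes the NLU layer exposes an intent confidence score between 0 and 1; the thresholds and trigger phrases are hypothetical and would need tuning on real calls.

```python
from dataclasses import dataclass


@dataclass
class EscalationPolicy:
    """Hand off to a human on explicit request or repeated low confidence."""
    min_confidence: float = 0.6         # hypothetical confidence threshold
    max_low_confidence_turns: int = 2   # consecutive misses before handoff
    low_confidence_turns: int = 0

    # Hypothetical trigger phrases in English and Hinglish.
    HUMAN_REQUESTS = ("talk to an agent", "customer care se baat", "agent se baat")

    def should_escalate(self, utterance: str, intent_confidence: float) -> bool:
        lowered = utterance.lower()
        # Rule 1: an explicit request for a human always wins.
        if any(phrase in lowered for phrase in self.HUMAN_REQUESTS):
            return True
        # Rule 2: count consecutive low-confidence turns, reset on a good one.
        if intent_confidence < self.min_confidence:
            self.low_confidence_turns += 1
        else:
            self.low_confidence_turns = 0
        return self.low_confidence_turns >= self.max_low_confidence_turns


policy = EscalationPolicy()
first_turn = policy.should_escalate("mera order kahan hai", 0.4)
second_turn = policy.should_escalate("nahi nahi, woh nahi", 0.3)
```

In this run the first unclear turn is tolerated and the second triggers the handoff, which keeps the caller from being trapped in a loop of clarification prompts.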

Measuring Performance: KPIs for Hinglish AI Voice Agents

Measuring the success of a Hinglish AI voice agent requires a KPI framework that goes beyond standard call center metrics.

The primary technical metrics to track include ASR word error rate specifically on Hinglish input (target below 12 percent for production-grade systems), intent recognition accuracy across code-switched utterances (target above 88 percent), and cross-lingual coreference resolution accuracy (how often the system correctly links references across language switches).

The primary operational metrics include containment rate (the percentage of calls fully resolved by the AI without human transfer, with top-performing deployments achieving 70 to 85 percent for tier-one queries), average handling time compared to human-agent baselines, and first-call resolution rate.

The primary business metrics include cost per resolution, customer satisfaction score (CSAT) measured specifically for AI-handled calls, and net promoter score trends for customer segments that primarily interact through the AI voice channel.

One often overlooked but critical metric is the language distribution of successful versus unsuccessful calls. If your AI voice agent's containment rate drops significantly for calls in which Hindi makes up more than 60 percent of the caller's speech, it signals that your system's Hindi comprehension is underperforming relative to its English capability. This kind of language-segmented performance analysis guides retraining priorities with precision.
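Language-segmented containment is straightforward to compute once each call record carries a Hindi share and a resolved-without-transfer flag. The sketch below assumes both fields are already available from call logs; the field names, the 40 percent lower cut-off, and the sample records are made up purely for illustration.

```python
from collections import defaultdict


def bucket(hindi_share: float) -> str:
    """Assign a call to a language-mix segment by its share of Hindi speech."""
    if hindi_share > 0.6:
        return "high_hindi"
    if hindi_share < 0.4:   # assumed lower cut-off
        return "high_english"
    return "balanced"


def containment_by_segment(calls: list[dict]) -> dict[str, float]:
    """Share of calls resolved without human transfer, per segment."""
    totals: dict[str, int] = defaultdict(int)
    contained: dict[str, int] = defaultdict(int)
    for call in calls:
        segment = bucket(call["hindi_share"])
        totals[segment] += 1
        contained[segment] += call["contained"]   # True counts as 1
    return {segment: contained[segment] / totals[segment] for segment in totals}


# Made-up call records, for illustration only.
calls = [
    {"hindi_share": 0.75, "contained": True},
    {"hindi_share": 0.80, "contained": False},
    {"hindi_share": 0.50, "contained": True},
    {"hindi_share": 0.20, "contained": True},
    {"hindi_share": 0.65, "contained": False},
    {"hindi_share": 0.30, "contained": True},
]
rates = containment_by_segment(calls)
```

In this toy data the high-Hindi segment is contained far less often than the other two, which is exactly the pattern that would push Hindi-heavy transcripts to the front of the retraining queue.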

Future Trends in Multilingual AI Voice Technology for India

The trajectory of AI voice agent technology for Indian multilingual contexts is moving rapidly in directions that will make current best-in-class systems look primitive within three to five years.

The most significant near-term development is the emergence of foundation models trained natively on Hinglish at scale. As of 2024, the dominant approach for multilingual AI voice agents still involves adapting English-first foundation models with multilingual fine-tuning. Within the next two to three years, models trained from scratch on Indian language data including Hinglish, Tanglish (Tamil-English), Benglish (Bengali-English), and other regional code-switching patterns will deliver step-change improvements in naturalness and accuracy.

Real-time emotion-aware code-switching response will become a standard capability. Current systems that detect emotional signals in Hinglish speech can route to escalation or adjust response tone. Next-generation systems will dynamically adjust both the language register and the emotional register of their responses in real time, matching the caller's emotional state and language pattern simultaneously.

Regional dialect modeling will become dramatically more precise. Instead of a single "Hindi" acoustic model, AI voice platforms will maintain separate sub-models for Bihari, Rajasthani, UP-inflected, and other regional Hindi variants, each with their characteristic English pronunciation patterns, making ASR accuracy consistent across India's full regional linguistic diversity.

Voice biometrics combined with language preference profiling will allow AI voice agents to instantly recognize returning callers and load their historical language preference patterns, starting the conversation in the caller's preferred Hinglish register without any setup friction. This will dramatically improve the experience for repeat callers, who represent the majority of calls in any subscription-based business.

The integration of large multimodal models will allow AI voice agents to process not just speech but also contextual signals from digital channels, such as recent app activity, previous chat transcripts, and pending transactions, allowing the agent to proactively address likely caller intent before the caller has fully articulated their query. In a Hinglish context, this proactive intent anticipation will make the AI voice interaction feel remarkably intelligent and personal.

Conclusion

Hindi-English code-switching is not an edge case in Indian customer communication. It is the dominant conversational mode for hundreds of millions of callers across every industry and every tier of Indian business. AI voice agents that cannot handle Hinglish fluently are not multilingual solutions. They are English-first solutions with a cosmetic Hindi overlay, and Indian callers recognize the difference within the first exchange.

The businesses that will win in Indian AI voice automation are those that invest in genuinely multilingual architectures, train on real Hinglish data, design responses in natural code-switching registers, and measure performance with language-specific precision. The technology to do this well exists today. The commercial returns for those who implement it correctly are substantial and well-documented.

For any enterprise operating at meaningful call volume in the Indian market, deploying a production-grade AI voice agent built for Hinglish is not a future consideration. It is a current competitive necessity. The companies that recognize this now and build the capability will enjoy significant advantages in cost efficiency, customer satisfaction, and operational scalability over those that continue to deploy monolingual or inadequate multilingual solutions.