Let me say something most blogs won’t.

Building a real-time AI voice assistant is not hard.

Building one that doesn’t sound like a confused robot having a bad day? That’s the real challenge.

I’ve worked on voice systems that looked perfect in demos and completely collapsed when real users started talking over them, pausing mid-sentence, or switching languages halfway through a call.

And that’s the gap.

Between “it works” and “it works in reality.”

If you're here, you're probably asking:

- How do I actually build a real-time AI voice assistant?

- What tech stack should I use?

- Why do most voice bots feel… broken?

Good. You're asking the right questions.

Let’s build this properly.

How Real-Time AI Voice Assistants Work

At a high level, every real-time AI voice assistant follows a loop:

Listen → Understand → Think → Respond

Simple. On paper.

Messy. In production.

Speech-to-Text (STT)

This is where raw voice becomes text.

If your STT fails, everything fails. Period.

Modern systems use deep-learning-based speech recognition that copes with accents, background noise, and interruptions.

But here’s the catch…

Latency.

Even a 500ms delay feels unnatural in a conversation.

Natural Language Processing (NLP)

Once the system has text, it needs to understand intent.

Not keywords. Intent.

There’s a difference between:

- “I want to cancel my subscription”

- “I think I’m done with this service”

Same meaning. Different words.

This is where NLP voice assistants shine or crash.

Text-to-Speech (TTS)

Now your AI needs to speak back.

And this is where most systems lose trust.

Because users instantly detect robotic voices.

Human-like AI conversations depend heavily on:

- Tone

- Pauses

- Emotional cadence

Miss those… and your assistant sounds fake.

Response Generation (LLMs)

This is the brain.

Large Language Models generate responses dynamically instead of relying on rigid scripts.

But here's a blunt truth:

If you don’t control the responses properly, your AI will hallucinate.

Yes. Even in voice.
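
One way to "control the responses" is to never speak raw model output. Here's a minimal sketch, assuming you ask the LLM to reply in JSON with an `action` and a `say` field. The action names, the JSON shape, and `validate_llm_reply` are all hypothetical, not any vendor's API.

```python
import json

# Hypothetical set of things the assistant is allowed to do.
ALLOWED_ACTIONS = {"cancel_subscription", "check_balance", "transfer_to_human"}
SAFE_FALLBACK = {"action": "transfer_to_human", "say": "Let me connect you with a teammate."}


def validate_llm_reply(raw: str) -> dict:
    """Accept the model's reply only if it is well-formed and uses a known action."""
    try:
        reply = json.loads(raw)
    except json.JSONDecodeError:
        return dict(SAFE_FALLBACK)
    if not isinstance(reply, dict):
        return dict(SAFE_FALLBACK)
    action, say = reply.get("action"), reply.get("say")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS or not isinstance(say, str):
        return dict(SAFE_FALLBACK)
    return reply
```

Anything the model invents outside the allowed list gets swapped for a safe handoff instead of being read out loud to the caller.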

Real-Time Streaming Architecture

This is the invisible hero.

Instead of waiting for full sentences, modern systems stream:

- Audio in chunks

- Partial transcriptions

- Incremental responses

This reduces delay and creates natural flow.

Without streaming… your assistant feels slow. And users hang up.
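
To make the streaming idea concrete, here's a small sketch using plain Python generators. The 20 ms frame size and 16 kHz, 16-bit audio format are assumptions, and `partial_transcripts` is a stand-in for a real streaming STT engine, which would emit hypotheses from audio rather than from a word list.

```python
from typing import Iterable, Iterator

CHUNK_MS = 20  # assumed frame size


def audio_chunks(pcm: bytes, sample_rate: int = 16000, sample_width: int = 2) -> Iterator[bytes]:
    """Yield fixed-duration chunks of raw PCM audio instead of one big buffer."""
    chunk_bytes = sample_rate * sample_width * CHUNK_MS // 1000
    for start in range(0, len(pcm), chunk_bytes):
        yield pcm[start:start + chunk_bytes]


def partial_transcripts(words: Iterable[str]) -> Iterator[str]:
    """Stand-in for streaming STT: emit a growing hypothesis after each word."""
    heard = []
    for word in words:
        heard.append(word)
        yield " ".join(heard)
```

One second of audio becomes 50 small chunks, and downstream stages can start working on "cancel my" before the caller has finished the sentence.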

Core Technologies Required

Let’s get practical.

AI Models (LLMs, ASR, TTS)

- ASR (speech recognition AI)

- NLP/LLMs for understanding

- TTS for voice output

You need all three working together in sync.
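
A rough sketch of what "working together" can look like in code: treat each model as a swappable function behind one small interface. `VoicePipeline` and the three stub functions are illustrative assumptions. In a real build, each one would wrap your chosen ASR, LLM, and TTS provider.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    transcribe: Callable[[bytes], str]   # ASR: audio in, text out
    respond: Callable[[str], str]        # LLM: user text in, reply text out
    synthesize: Callable[[str], bytes]   # TTS: reply text in, audio out

    def handle_turn(self, audio: bytes) -> bytes:
        """Run one conversational turn through all three stages."""
        text = self.transcribe(audio)
        reply = self.respond(text)
        return self.synthesize(reply)


# Stub components so the sketch runs without any external service.
pipeline = VoicePipeline(
    transcribe=lambda audio: audio.decode("utf-8"),
    respond=lambda text: f"You said: {text}",
    synthesize=lambda reply: reply.encode("utf-8"),
)
```

Keeping the stages behind plain callables means you can swap one provider without touching the other two.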

APIs & Frameworks

You’re not building everything from scratch (unless you hate sleep).

APIs handle:

- Speech processing

- Language understanding

- Voice synthesis

WebRTC / VoIP Systems

This is how real-time audio travels.

Without low-latency communication protocols like WebRTC… your “real-time” system isn’t real-time.

Cloud Infrastructure

Voice AI is resource-heavy.

You’ll need:

- Scalable compute

- Low-latency servers

- Global availability

Otherwise, an AI call agent hosted in India won’t respond smoothly for users in other regions.

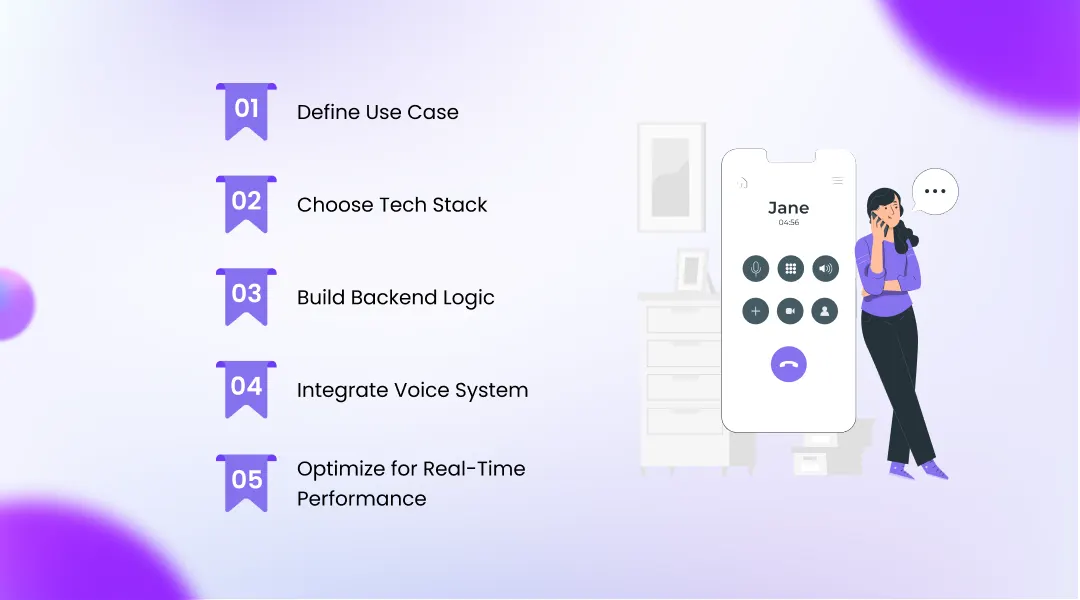

Step-by-Step Guide to Build AI Voice Assistant

Let’s build this step by step.

No fluff.

Step 1: Define Use Case

This is where most people mess up.

They start with tech instead of purpose.

Ask yourself:

- Customer support?

- Sales calls?

- IVR replacement?

Because building an AI call assistant for support is very different from building one for sales.

Step 2: Choose Tech Stack

Pick wisely.

Your stack defines your system’s limits.

- STT tools → Whisper, Google STT

- NLP engine → LLM APIs

- TTS providers → realistic voice engines

Want Low-Cost AI Voice Assistants?

Then optimize here. Not later.

Step 3: Build Backend Logic

This is the brain wiring.

You’ll need:

- Intent recognition

- Conversation flow management

- Context memory

Here’s a question most people ignore:

What happens when the user says something unexpected?

If you don’t handle that… your system breaks.
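
Here's a toy sketch of those three pieces together: intent recognition, context memory, and a fallback for the unexpected. The example phrases, the word-overlap matcher, and the thresholds are all assumptions for illustration. A production system would use an LLM or a trained classifier instead of `classify`.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical example phrases per intent.
INTENT_EXAMPLES = {
    "cancel": ["i want to cancel my subscription", "i think i'm done with this service"],
    "billing": ["why was i charged twice", "i have a question about my bill"],
}
MIN_OVERLAP = 0.5  # assumed confidence threshold
MAX_MISSES = 2     # assumed number of misses before handing off to a human


def classify(text: str) -> Optional[str]:
    """Toy matcher: best Jaccard word overlap against the example phrases."""
    words = set(text.lower().split())
    best_intent, best_score = None, 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            ex_words = set(example.split())
            score = len(words & ex_words) / len(words | ex_words)
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= MIN_OVERLAP else None


@dataclass
class Conversation:
    history: list = field(default_factory=list)  # context memory: (speaker, text) pairs
    misses: int = 0                              # consecutive turns we failed to understand

    def handle(self, text: str) -> str:
        self.history.append(("user", text))
        intent = classify(text)
        if intent is None:
            self.misses += 1
            if self.misses >= MAX_MISSES:
                reply = "Let me get a person to help you."
            else:
                reply = "Sorry, I didn't catch that. Could you say it another way?"
        else:
            self.misses = 0
            reply = f"Okay, I can help with {intent}."
        self.history.append(("assistant", reply))
        return reply
```

The important part isn't the matcher. It's that an unrecognized utterance has a defined path: ask once, then escalate.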

Step 4: Integrate Voice System

Now connect your AI to actual calls.

Using:

- Twilio

- WebRTC

This is where your assistant becomes a real AI phone answering system in India.
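
For the Twilio side, here's a rough sketch of the two pieces you'd typically write yourself. It assumes Twilio's Media Streams feature as I understand it from their docs: a TwiML `<Connect><Stream>` instruction that points the call at your WebSocket server, and JSON messages whose `media` events carry base64-encoded audio. The URL and function names are made up for illustration, so check the current Twilio documentation before relying on the exact fields.

```python
import base64
import json
import xml.etree.ElementTree as ET
from typing import Optional


def stream_twiml(ws_url: str) -> str:
    """Build TwiML that asks Twilio to stream call audio to our WebSocket server."""
    response = ET.Element("Response")
    connect = ET.SubElement(response, "Connect")
    ET.SubElement(connect, "Stream", url=ws_url)
    return ET.tostring(response, encoding="unicode")


def audio_from_message(message: str) -> Optional[bytes]:
    """Return raw audio bytes from a 'media' event, or None for other events."""
    event = json.loads(message)
    if event.get("event") != "media":
        return None  # e.g. connection or call lifecycle events carry no audio
    return base64.b64decode(event["media"]["payload"])
```

Your webhook would return `stream_twiml("wss://your-server.example/media")` when a call comes in, and your WebSocket handler would pass each incoming message through `audio_from_message` before feeding the bytes to STT.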

Step 5: Optimize for Real-Time Performance

This is where pros separate from beginners.

You need:

- Fast inference

- Streaming responses

- Minimal API latency

Even a 1-second delay = bad experience.

Users don’t wait.

They hang up.
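
One common trick for streaming responses is to start speaking as soon as the first sentence is ready, instead of waiting for the whole LLM reply. A minimal sketch, assuming the LLM hands you text tokens one at a time (`sentences_from_tokens` is a hypothetical helper, not part of any SDK):

```python
from typing import Iterable, Iterator

SENTENCE_END = (".", "!", "?")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed tokens into sentences so TTS can start before the reply is complete."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        yield buffer.strip()  # flush whatever is left when the stream ends
```

Each yielded sentence can go straight to TTS while the model is still generating the next one. The splitting rule is deliberately naive: abbreviations like "Dr." will cut a sentence early, so treat it as a starting point.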

Best Tools & Platforms in 2026

Here’s what’s actually working right now:

- OpenAI Whisper → speech recognition

- Google Speech-to-Text → scalable STT

- ElevenLabs → realistic TTS

- Twilio Voice API → call handling

I’ve tested most of these.

They work. But only if integrated properly.

Real-Time Architecture Explained

Let’s simplify this.

A real-time voice AI system looks like this:

User speaks → Audio stream → STT → NLP/LLM → Response → TTS → Audio output

All of it has to happen within a few hundred milliseconds.

The key is a low-latency pipeline.

(And yes… this is where most systems fail.)

Because APIs + network delays + processing time = lag.

Fixing that requires:

- Edge computing

- Smart caching

- Streaming architecture
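
The "APIs + network delays + processing time = lag" equation is worth writing down as an actual budget. Here's a sketch with made-up numbers: every value in `STAGES_MS` is an assumption for illustration, not a measurement, and the 500 ms target comes from the rule of thumb earlier in this post.

```python
BUDGET_MS = 500  # target: delays around this size already feel unnatural

# Illustrative per-stage latencies in milliseconds (assumed, not measured).
STAGES_MS = {
    "network round trip": 80,
    "STT final result": 150,
    "LLM first token": 250,
    "TTS first audio": 120,
}


def total_latency(stages: dict) -> int:
    """Sum of all stage latencies when they run one after another."""
    return sum(stages.values())


def slowest_stage(stages: dict) -> str:
    """The stage to optimize first."""
    return max(stages, key=stages.get)


def over_budget_by(stages: dict, budget: int = BUDGET_MS) -> int:
    """How many milliseconds must be cut to hit the budget (0 if already within it)."""
    return max(0, total_latency(stages) - budget)
```

With these assumed numbers the stages add up to 600 ms, which is 100 ms over budget, and the LLM's first token is the biggest single cost. Swap in your own measurements and the same three functions tell you where to spend your effort.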

Use Cases of AI Voice Assistants

This isn’t theory. This is already happening.

Call Center Automation

Replacing repetitive support calls with AI customer support automation.

AI Sales Calls

Outbound calls that qualify leads and book meetings.

Appointment Booking

Healthcare, salons, services: fully automated.

Customer Support

Handling FAQs, complaints, and requests 24/7.

And the biggest shift?

Businesses are moving from static IVR systems to conversational AI voice agents.

Challenges & Solutions

Let’s not pretend this is easy.

Latency Issues

Problem: Delays kill conversations.

Solution: Streaming + optimized APIs.

Voice Accuracy

Problem: Misunderstanding users.

Solution: Better training + fallback logic.

Multilingual Support

Especially critical for India.

Your AI call agent for India must handle:

- Hindi

- English

- Regional languages
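
A tiny piece of that puzzle can be sketched in code: once you have a transcript, check which script dominates and pick a matching TTS voice per utterance. This is an assumption-heavy illustration. It only separates Devanagari from Latin text, it won't recognize Hindi typed in Latin letters, and the locale mapping is a hypothetical choice.

```python
# Hypothetical mapping from detected script to the TTS locale to use.
VOICE_BY_SCRIPT = {"devanagari": "hi-IN", "latin": "en-IN"}


def dominant_script(text: str) -> str:
    """Return 'devanagari', 'latin', or 'unknown' based on which script has more characters."""
    devanagari = sum(1 for ch in text if "\u0900" <= ch <= "\u097f")  # Devanagari Unicode block
    latin = sum(1 for ch in text if ch.isascii() and ch.isalpha())
    if devanagari == 0 and latin == 0:
        return "unknown"
    return "devanagari" if devanagari >= latin else "latin"


def pick_voice(text: str, default: str = "en-IN") -> str:
    """Choose a TTS locale for this utterance, falling back to a default."""
    return VOICE_BY_SCRIPT.get(dominant_script(text), default)
```

Running the check per utterance rather than per call is what lets the assistant follow a caller who switches languages halfway through.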

Cost Optimization

Here’s the painful truth:

Voice AI can get expensive fast.

Solution?

- Efficient API usage

- Hybrid models

- Smart scaling

Future of Voice AI in 2026 & Beyond

Let me be blunt.

Call centers as we know them are slowly disappearing.

AI-driven communication is taking over.

We’re moving toward:

- Emotion-aware voice assistants

- Human-like AI conversations

- Fully autonomous voice agents

But here’s the twist…

The winners won’t be the ones with the best tech.

They’ll be the ones who understand human conversation best.

Conclusion

If you take one thing from this guide, let it be this:

Real-time AI voice assistants are not a tech problem.

They’re a human experience problem disguised as technology.

Build for speed. Design for humans. Test in real chaos, not perfect demos.

That’s how you win.