Let me start with something blunt.

Most “AI voice agents” you see online? They’re glorified IVR systems wearing a fresh coat of AI paint.

I’ve built these systems. I’ve watched them fail in production. I’ve seen customers hang up because the bot couldn’t handle a simple interruption.

And I’ve also seen the opposite. Voice agents that felt… real. Fluid. Helpful.

That difference? Architecture. Not hype.

So if you’re here to understand how to build AI voice agent systems in 2026, I’m not giving you theory. I’m giving you what actually works.

What is an AI Voice Agent?

An AI voice agent is a system that can listen, understand, think, and respond using natural speech in real time.

Not menus. Not “Press 1 for support.”

Actual conversation.

Chatbot vs Voice Agent

Let’s not confuse the two.

- Chatbots: Text-based, slower, forgiving

- Voice Agents: Real-time, interrupt-driven, zero patience from users

Here’s the uncomfortable truth: Voice is harder. Much harder.

Why? Because humans don’t wait.

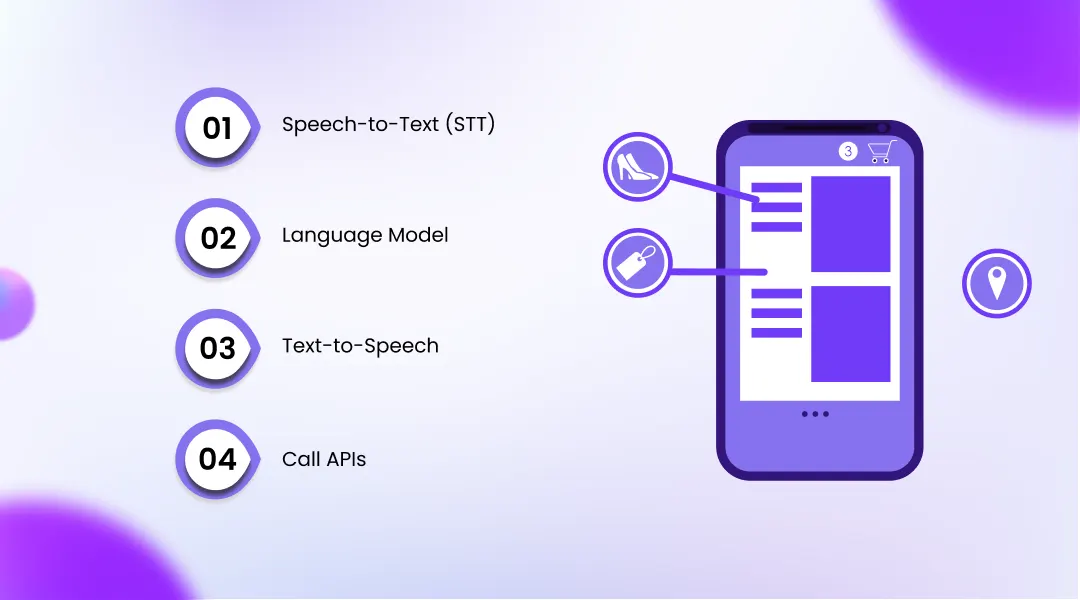

Key Components

Every AI voice agent has three core layers:

- Speech-to-Text (STT): Converts voice into text

- Language Model (LLM): Understands and generates responses

- Text-to-Speech (TTS): Converts text back into voice

Miss one piece, or optimize it poorly, and the entire experience collapses.

How AI Voice Agents Work

At a high level, it looks simple:

Voice Input → Processing → Response

But under the hood? It’s chaos. Beautiful chaos.

Real-Time Pipeline

Here’s what actually happens:

- User speaks

- Audio is streamed (not uploaded later—streamed live)

- STT transcribes in milliseconds

- LLM processes intent

- Response is generated

- TTS converts it back to speech

- Audio is played instantly

All of this needs to happen within 1–2 seconds, end to end.
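To make that budget concrete, here is a minimal Python sketch that times each stage of a single turn. The three stage functions (`fake_stt`, `fake_llm`, `fake_tts`) are made-up stand-ins rather than real vendor calls, and the 1.5 second budget is simply an assumed figure inside the range above.

```python
import time
from typing import Dict, Tuple

# Hypothetical stand-ins for real STT / LLM / TTS calls.
def fake_stt(audio: bytes) -> str:
    return "what are your opening hours"

def fake_llm(text: str) -> str:
    return "We are open nine to five, Monday to Friday."

def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend these bytes are audio

def run_turn(audio: bytes, budget_s: float = 1.5) -> Tuple[bytes, Dict[str, float], bool]:
    """Run one conversational turn and record how long each stage took."""
    timings: Dict[str, float] = {}
    value = audio
    for name, stage in (("stt", fake_stt), ("llm", fake_llm), ("tts", fake_tts)):
        start = time.perf_counter()
        value = stage(value)
        timings[name] = time.perf_counter() - start
    # Assumed budget: the whole turn should fit inside budget_s seconds.
    within_budget = sum(timings.values()) <= budget_s
    return value, timings, within_budget

audio_out, timings, ok = run_turn(b"\x00" * 320)
print(timings, "within budget:", ok)
```

With real services plugged in, the per-stage timings show where the budget actually goes, which is usually more useful than one total number.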

Let me ask you something: would you wait 5 seconds for a reply on a phone call?

Exactly.

AI Voice Agent Architecture (2026)

This is where most people get lost. Or worse, oversimplify.

Let’s break it down properly.

Speech-to-Text (STT)

This layer captures voice and converts it into text.

What matters:

- Accuracy in noisy environments

- Real-time streaming

- Multi-language support

Language Model (LLM)

This is the brain.

It decides:

- What the user wants

- What to say next

- How to say it

In 2026, LLMs are context-aware, memory-enabled, and capable of handling multi-turn conversations.

But here’s the catch: they’re only as good as your prompt design and system logic.

Text-to-Speech (TTS)

This is where personality lives.

Bad TTS? Your agent sounds robotic. Good TTS? It feels human.

Subtle pauses. Tone variation. Emotion.

That’s what users notice.

Call APIs (Telephony Layer)

You need infrastructure to handle calls.

This includes:

- Call routing

- Webhooks

- Real-time audio streaming

Without this, your “AI agent” is just a demo.

Tools & Technologies You Need

Let’s get practical.

LLMs

- OpenAI APIs

- Other enterprise-grade LLM providers

Speech-to-Text Tools

- Whisper-based systems

- Real-time transcription APIs

Text-to-Speech Tools

- Neural voice engines

- Emotion-aware TTS platforms

Telephony APIs

- Call handling platforms

- Voice streaming infrastructure

Here’s what most guides won’t tell you:

It’s not about picking the “best” tool. It’s about making them work together without latency spikes.

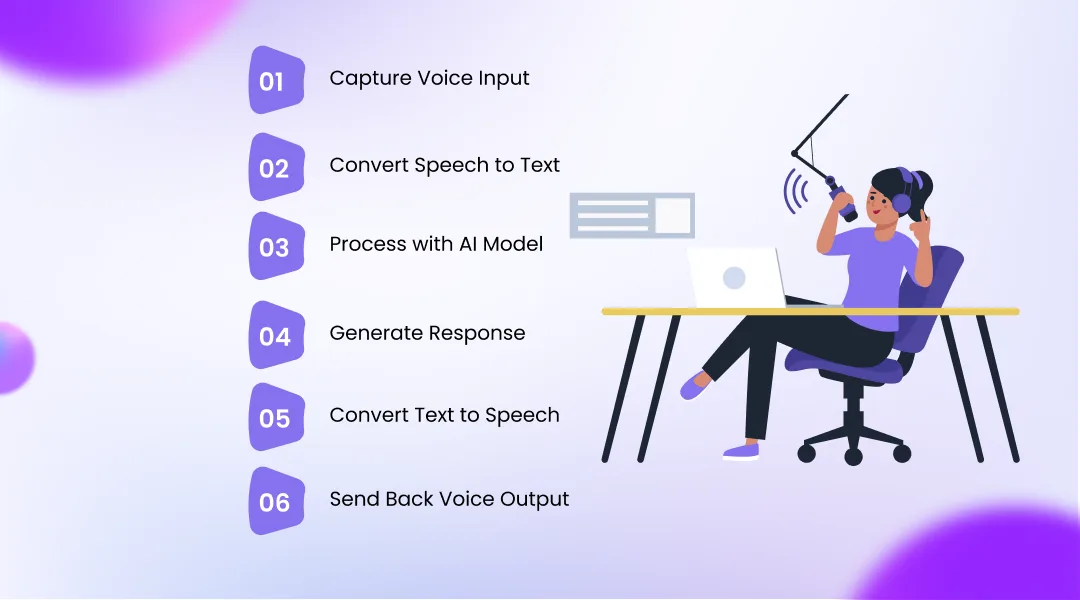

Step-by-Step Guide to Building an AI Voice Agent

Now let’s build.

Step 1: Capture Voice Input

Use a telephony or WebRTC system to capture live audio.

Step 2: Convert Speech to Text

Stream audio into an STT engine.

Don’t batch it. Stream it.
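Here is a rough sketch of what "stream it" can look like in practice: cut the incoming PCM audio into small frames and push each one out as soon as it exists. The sample rate, sample width, and 20 ms frame size are assumptions (typical for telephony, but check your own stack), and `send` is a hypothetical callback standing in for whatever your STT client exposes.

```python
from typing import Callable, Iterator

SAMPLE_RATE_HZ = 8000   # assumed: common telephony sample rate
BYTES_PER_SAMPLE = 2    # assumed: 16-bit mono PCM
FRAME_MS = 20           # assumed frame length

def frames(pcm: bytes, frame_ms: int = FRAME_MS) -> Iterator[bytes]:
    """Yield fixed-size frames from a buffer of raw PCM audio."""
    frame_bytes = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * frame_ms // 1000
    for offset in range(0, len(pcm), frame_bytes):
        yield pcm[offset:offset + frame_bytes]

def stream_to_stt(pcm: bytes, send: Callable[[bytes], None]) -> int:
    """Push frames to `send` one at a time; returns how many were sent."""
    count = 0
    for frame in frames(pcm):
        send(frame)  # hypothetical: e.g. a websocket write to the STT engine
        count += 1
    return count

sent = []
print(stream_to_stt(bytes(16000), sent.append))  # one second of silence
```

Smaller frames mean the STT engine can start producing partial transcripts sooner, at the cost of more messages on the wire.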

Step 3: Process with AI Model

Send text to your LLM.

Add:

- Context

- Memory

- Business logic
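One way to picture those three additions is as a small prompt builder. This is only a sketch: the `Conversation` class and its method names are invented for illustration, and the role/content message shape mirrors what many chat-style LLM APIs accept, so check your provider's format before relying on it.

```python
from collections import deque
from typing import Deque, Dict, List

Message = Dict[str, str]

class Conversation:
    def __init__(self, system_prompt: str, max_turns: int = 6) -> None:
        self.system_prompt = system_prompt
        # Memory: a rolling window, so old turns drop off automatically.
        self.history: Deque[Message] = deque(maxlen=max_turns * 2)

    def add(self, role: str, content: str) -> None:
        self.history.append({"role": role, "content": content})

    def build_messages(self, user_text: str, facts: Dict[str, str]) -> List[Message]:
        # Business logic: facts looked up by your own code, not guessed by the model.
        context = "; ".join(f"{key}: {value}" for key, value in facts.items())
        system = f"{self.system_prompt}\nKnown facts: {context}"
        return [
            {"role": "system", "content": system},
            *self.history,
            {"role": "user", "content": user_text},
        ]

conv = Conversation("You are a concise phone agent.")
conv.add("user", "Hi, I need an appointment.")
conv.add("assistant", "Sure, which day works for you?")
messages = conv.build_messages("Friday morning", {"open_days": "Mon-Fri"})
print(len(messages))
```

Keeping the window short matters for voice in particular, since a longer prompt generally means a slower first token.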

Step 4: Generate Response

The LLM creates a response.

This is where tone matters.

A lot.

Step 5: Convert Text to Speech

Feed the response into TTS.

Optimize for:

- Natural pauses

- Low latency
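For the pauses, one common lever is SSML, the W3C markup that many TTS engines accept. The sketch below wraps a reply in `<speak>` and puts a `<break>` between sentences. The helper name `to_ssml` and the 250 ms default are my own assumptions, and how faithfully an engine honors breaks varies, so treat this as a starting point.

```python
import re
from xml.sax.saxutils import escape

def to_ssml(text: str, pause_ms: int = 250) -> str:
    """Wrap text in SSML with a short break between sentences."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    pause = f'<break time="{pause_ms}ms"/>'
    # Escape &, < and > so the reply cannot break the markup.
    return "<speak>" + pause.join(escape(s) for s in sentences) + "</speak>"

print(to_ssml("Got it. Tom & I will call you."))
```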

Step 6: Send Back Voice Output

Stream audio back to the user.

Not after processing. During.

That’s the difference between good and great systems.

Building a Real-Time AI Voice Agent (Advanced)

This is where things break.

Latency Optimization

- Use streaming everywhere

- Reduce API round trips

- Cache intelligently
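As one example of caching, greetings and confirmations get spoken constantly, so their audio can be synthesized once and reused. A minimal sketch with Python's `functools.lru_cache` follows; `slow_synthesize` is a hypothetical stand-in for a network call to your TTS engine, and the cache size is an arbitrary assumption.

```python
from functools import lru_cache

def slow_synthesize(text: str) -> bytes:
    """Hypothetical stand-in for a slow network call to a TTS engine."""
    return text.encode("utf-8")  # pretend these bytes are audio

@lru_cache(maxsize=256)  # assumed size; tune to your phrase inventory
def cached_tts(text: str) -> bytes:
    return slow_synthesize(text)

def speak(text: str) -> bytes:
    # Normalize whitespace so trivially different strings share one entry.
    return cached_tts(" ".join(text.split()))

speak("Thanks for calling.")
speak("Thanks   for calling. ")
print(cached_tts.cache_info())
```

This only pays off for text that repeats exactly. Replies that embed a name or a date will miss the cache unless you split them into a fixed part and a variable part.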

Streaming Responses

Don’t wait for full sentences.

Start speaking while generating.

Yes, it’s tricky. But it changes everything.
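A simple way to approximate this is to buffer LLM tokens only until the next natural break, then hand that chunk to TTS while the model keeps generating. The sketch below assumes tokens arrive as plain strings. The `min_chars` threshold and the set of break characters are guesses you would tune by ear.

```python
from typing import Iterable, Iterator

BREAK_CHARS = ".!?,;:"  # assumed: punctuation that marks a speakable pause

def speakable_chunks(tokens: Iterable[str], min_chars: int = 12) -> Iterator[str]:
    """Group streamed tokens into chunks that can be sent to TTS early."""
    buffer = ""
    for token in tokens:
        buffer += token
        stripped = buffer.rstrip()
        # Flush once the chunk is long enough and ends on a natural break.
        if stripped and len(stripped) >= min_chars and stripped[-1] in BREAK_CHARS:
            yield stripped.strip()
            buffer = ""
    if buffer.strip():
        yield buffer.strip()  # whatever is left when the stream ends

tokens = ["Sure", ",", " I", " can", " help", " with", " that", ".",
          " What", " day", " works", "?"]
for chunk in speakable_chunks(tokens):
    print(chunk)
```

The minimum length keeps very short fragments such as "Sure," from being synthesized on their own, which tends to sound choppy.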

Interrupt Handling

Real humans interrupt.

Your AI should handle it: stop talking, listen, and respond to what was just said.

If your system can’t handle interruptions, it’s not a voice agent. It’s a talking script.
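The core mechanic (often called barge-in) is to run playback as a cancellable task and stop it the moment the caller starts speaking. Here is a minimal `asyncio` sketch. The `play` function only pretends to play audio, and the `user_spoke` event is an assumed signal that would come from your voice activity detection in a real system.

```python
import asyncio
from typing import List

async def play(chunks: List[str], played: List[str]) -> None:
    """Pretend to play audio chunks; each one takes a little time."""
    for chunk in chunks:
        await asyncio.sleep(0.05)
        played.append(chunk)

async def speak_with_barge_in(chunks: List[str], user_spoke: asyncio.Event) -> List[str]:
    """Play chunks, but stop as soon as `user_spoke` is set."""
    played: List[str] = []
    playback = asyncio.create_task(play(chunks, played))
    barge_in = asyncio.create_task(user_spoke.wait())
    await asyncio.wait({playback, barge_in}, return_when=asyncio.FIRST_COMPLETED)
    for task in (playback, barge_in):
        if not task.done():
            task.cancel()  # stop talking, or stop waiting for an interruption
    await asyncio.gather(playback, barge_in, return_exceptions=True)
    return played

async def demo() -> List[str]:
    user_spoke = asyncio.Event()
    # Simulate the caller interrupting roughly 120 ms into the reply.
    asyncio.get_running_loop().call_later(0.12, user_spoke.set)
    return await speak_with_barge_in(["one", "two", "three", "four", "five"], user_spoke)

print(asyncio.run(demo()))
```

The returned list tells you what the caller actually heard before cutting in, which is worth feeding back into the conversation history so the model does not assume the full reply landed.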

Use Cases of AI Voice Agents

Let’s make this real.

Customer Support

Automate repetitive queries. Reduce load.

Sales Calls

Qualify leads. Book demos.

Appointment Booking

Healthcare. Services. Scheduling.

Lead Qualification

Filter serious prospects from noise.

If you’re exploring AI Voice Agents for Call Centers, this is where the real ROI starts to show.

Benefits for Businesses

Cost Reduction

Fewer human agents. Lower operational cost.

24/7 Availability

No shifts. No downtime.

Human-Like Interaction

Better engagement. Higher satisfaction.

And yes, this is why companies are aggressively adopting the best AI Voice Agents today.

Challenges & Limitations

Let’s not pretend it’s perfect.

Voice Latency

Even slight delays ruin the experience.

Accuracy Issues

Accents. Noise. Context loss.

Still a problem.

Multi-Language Complexity

Handling Hindi, English, Hinglish… properly?

Not trivial.

(Not even close.)

Future of AI Voice Agents (2026 & Beyond)

This is where things get interesting.

Emotion-Aware AI

Detecting tone. Responding accordingly.

Autonomous Agents

Less human intervention. More decision-making.

Hyper-Personalization

Agents that remember users across interactions.

I’ve tested early versions of this.

It’s impressive. And slightly unsettling.

Conclusion

If you’ve made it this far, you already know:

Building an AI voice agent isn’t about plugging APIs together.

It’s about designing a system that behaves like a human conversation.

And that’s hard.

But it’s also where the opportunity is.

Companies like OnDial are focusing on exactly this: building voice systems that don’t just work, but actually feel right. And that’s the real benchmark.

Not functionality. Experience.